Replicate PostgreSQL to BigQuery in minutes

PostgreSQL is a widely used open-source relational database management system, but running analytics queries on it within the desired time can take significant effort and expertise. If you want to track all user events in a timely and safe manner and let business users query that data freely, moving your data from PostgreSQL to BigQuery is a sound choice. Moving PostgreSQL data to BigQuery doesn’t have to be complex or expensive: Daton simplifies the process, reducing your spending and the time it takes to deliver value from your PostgreSQL data. Let’s see how!

In this blog, we will demonstrate how to replicate your PostgreSQL data to BigQuery with best practices, while ensuring data integrity.

Why integrate PostgreSQL to BigQuery?

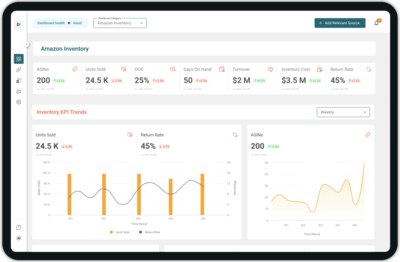

Businesses often need to move and transform data between multiple internal data stores. When data is scattered across different information systems, it is hard for a business user to analyze it and make sense of it. Integrating your PostgreSQL data with BigQuery centralizes your data sources and gives you a consolidated view of the data. It also lets departments create detailed dashboards that act as a single source for customer information from different platforms.

PostgreSQL Overview

PostgreSQL is an advanced, enterprise-class, and open-source relational database system. It supports both SQL (relational) and JSON (non-relational) querying. PostgreSQL is a highly stable database backed by more than 20 years of development by the open-source community. It’s known for its stability and its ability to handle high volumes of transactions. PostgreSQL is used as a primary database for many web applications as well as mobile and analytics applications.

BigQuery Overview

Google BigQuery is a cloud-based data warehouse service from Google. It is a REST-based web service that allows you to run complex analytical SQL queries over large sets of data. BigQuery is also a serverless, highly scalable, and cost-effective multi-cloud data warehouse designed for business agility. Its fast deployment cycle and on-demand pricing make it one of the most accessible and popular data warehouses.

How to replicate PostgreSQL to BigQuery?

Here’s an overview of the two approaches you can use to replicate PostgreSQL data to BigQuery. It will help you evaluate the pros and cons of each and choose the one that best suits your requirements.

Build your own data pipeline

Building your own pipeline takes considerable experience, time, and manpower. Because several integrated steps must run one after another, the chance of errors is higher. You need to extract data from PostgreSQL (for example, with SQL queries or exports) and then load it correctly into the BigQuery data warehouse. A custom data pipeline also requires regular intervention, which makes it cumbersome to maintain.
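To give a sense of the work involved, here is a minimal sketch of just one step in a hand-built pipeline: turning rows read from PostgreSQL into newline-delimited JSON, a file format that BigQuery load jobs accept. The function names `to_json_safe` and `rows_to_ndjson` are hypothetical, and the sketch assumes rows arrive as tuples of Python values, as a typical PostgreSQL driver would return them. Connecting to the database, uploading the file, and handling errors would all be additional code.

```python
import datetime
import decimal
import json


def to_json_safe(value):
    """Convert values that json.dumps cannot serialize on its own."""
    if isinstance(value, (datetime.datetime, datetime.date)):
        return value.isoformat()
    if isinstance(value, decimal.Decimal):
        # A string keeps the exact precision of the original value.
        return str(value)
    return value


def rows_to_ndjson(columns, rows):
    """Serialize rows as newline-delimited JSON, one object per line."""
    lines = []
    for row in rows:
        record = {col: to_json_safe(val) for col, val in zip(columns, row)}
        lines.append(json.dumps(record))
    return "\n".join(lines)


if __name__ == "__main__":
    sample_rows = [
        (1, decimal.Decimal("9.50"), datetime.datetime(2024, 1, 2, 3, 4, 5)),
    ]
    print(rows_to_ndjson(["id", "amount", "created_at"], sample_rows))
```

Even this small step needs decisions about type conversion, which is part of why a custom pipeline tends to accumulate maintenance work.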

Use Daton to integrate PostgreSQL and BigQuery

Integrating PostgreSQL and BigQuery with Daton is the fastest and easiest way to save time and effort. Using an eCommerce data pipeline like Daton simplifies automated reporting and shortens the time it takes to build it.

Configuring data replication on Daton only takes a few minutes and a few clicks. Your analysts do not have to write any code or manage any infrastructure, yet you can get access to PostgreSQL data in a few hours.

Daton’s simple, easy-to-use interface lets analysts and developers configure data replication from PostgreSQL into BigQuery using UI elements.

Daton takes care of:

- Authentication

- Rate limits

- Sampling

- Historical data load

- Incremental data load

- Table creation, deletion, and reloads

- Refreshing access tokens

- Notifications

and many more important functions, so that data analysts can focus on analysis rather than worrying about data replication.
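To illustrate the difference between the historical and incremental loads in the list above, here is a minimal sketch of the common "watermark" technique. It is not Daton's implementation. The function names, the `orders` table, and the `updated_at` column are hypothetical, and the `%s` placeholder assumes a driver that uses that parameter style (psycopg2 does). Table and column names are assumed to come from trusted configuration.

```python
def build_extract_query(table, columns, cursor_column, watermark=None):
    """Return (sql, params) for a historical or incremental extract."""
    select = f"SELECT {', '.join(columns)} FROM {table}"
    if watermark is None:
        # Historical load: no filter, read the whole table.
        return f"{select} ORDER BY {cursor_column}", ()
    # Incremental load: only rows changed since the last successful run.
    sql = f"{select} WHERE {cursor_column} > %s ORDER BY {cursor_column}"
    return sql, (watermark,)


def advance_watermark(rows, cursor_index, previous=None):
    """Return the highest cursor value seen, or the previous one if no rows arrived."""
    values = [row[cursor_index] for row in rows]
    return max(values) if values else previous


if __name__ == "__main__":
    # First run: full history. Later runs: only rows past the stored watermark.
    print(build_extract_query("orders", ["id", "updated_at"], "updated_at"))
    print(build_extract_query("orders", ["id", "updated_at"], "updated_at", "2024-01-01"))
```

The watermark returned by `advance_watermark` would be stored after each successful run and passed to the next one, so each run picks up where the previous one stopped.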

Steps to integrate PostgreSQL with Daton

- Sign in to Daton

- Select PostgreSQL from the integrations page

- Provide the Integration Name, Replication Frequency, and History. The integration name is used to create the tables for the integration and cannot be changed later

- You will be redirected to the PostgreSQL login to authorize Daton to extract data periodically

- After successful authentication, you will be prompted to choose from the list of available PostgreSQL accounts

- Select required tables from the available list of tables

- Then select all required fields for each table

- Submit the integration

For more information, visit PostgreSQL Connector.

Sign up for a trial of Daton today!

Here are more reasons to explore Daton for PostgreSQL to BigQuery Integration

- Faster integration – PostgreSQL to BigQuery is one of the integrations Daton handles conveniently and seamlessly. You can connect PostgreSQL to BigQuery in a few steps.

- Low Effort & Zero Maintenance – Daton automatically takes care of all the data replication processes and infrastructure once you sign up for a Daton account and configure the data sources. No need to manage infrastructure or write manual code.

- Data consistency guarantee and an incredibly friendly customer support team ensure you can leave the data engineering to Daton and focus on analysis and insights!

- Enterprise-grade data pipeline at an unbeatable price to help every business become data-driven. Get started with a single integration today for just $10 and scale up as your data needs grow.

- Robust Scheduling Options – Schedule jobs based on your requirements using a simple configuration step.

- Support for all major cloud data warehouses including Google BigQuery, Snowflake, Amazon Redshift, Oracle Autonomous Data Warehouse, PostgreSQL, and more.

- Flexible loading options allow you to optimize data loading behavior to maximize storage utilization and ease of querying.

- Enterprise-grade encryption gives you peace of mind.

- Support for 100+ data sources – In addition to PostgreSQL, Daton can extract data from a varied range of sources such as Sales and Marketing applications, Databases, Analytics platforms, Payment platforms, and much more.

With Daton as part of your infrastructure, your analytics and reports can reflect your most relevant metrics with minimal delay.

For all sources, check our data connectors page.
