If you’ve come here, you are probably looking for a way to transfer data from Customer.io to Google BigQuery quickly. In this article, we talk about why email automation services like Customer.io are essential and how you can get access to this data in your data warehouse without having to write any code.

The choices available to eCommerce businesses for marketing and selling their merchandise grow every day. eCommerce vendors have to decide which channels to sell on and which channels to spend their advertising dollars on. Sales channels include:

- Branded websites

- In some cases branded eCommerce sites per country

- Marketplaces

- In many instances, marketplaces per country

- Retail stores, to create an omnichannel presence and to engage buyers where they shop

Complexity increases with the addition of every sales channel. The marketing channels available to support an online business add a further set of choices:

- Social Media ads – Some platforms include Google Ads, Instagram, LinkedIn, Twitter, and others

- Digital ads and remarketing – Criteo, Taboola, Outbrain, and others

- PPC – Google Ads, Bing ads, and others

- Email – Mailchimp, Klaviyo, Hubspot, Customer.io and others

- Podcasts

- Affiliate – Refersion, CJ Affiliates

- Influencer marketing

- Offline marketing

Choice is a great virtue, but it leads to complexity, and complexity that is not managed properly can reduce the efficiency of running an eCommerce business. Most eCommerce businesses grapple with this complexity; some well and many not so well.

In the competitive digital landscape we live in, eCommerce businesses of all sizes that aspire to grow and stay profitable must look deeply into their data and leverage it for growth.

As competition increases, eCommerce companies should strive to be more data-driven. Some of the reasons include:

- Understanding the balance between demand and supply

- Understanding customer lifetime value (LTV)

- Segmenting the customer base for effective marketing

- Finding opportunities to reduce wasteful spend

- Optimizing digital assets to maximize revenue for the same marketing spend

- Improving ROI on ad campaigns

- Offering an engaging and seamless experience in every channel through which the customer engages with the brand

Businesses these days need to be efficient in terms of their data analysis. They are struggling to make sense of the data generated from various applications and tools used to manage different processes efficiently.

For the reasons highlighted above, a typical eCommerce business operates at least 10-15 different software products and platforms to deliver on customer expectations. As a result, data silos are created, which makes it more difficult to consolidate data and use it for reporting, operations, analysis, and making informed, forward-looking decisions.

Email marketing automation tools like Customer.io generate data such as open rates, contact tracking, clicks, contact lists, email campaign details, events, and much more. All of this data needs to be analyzed along with product demand and user behaviour data to reduce losses. It thus becomes essential for businesses to reconcile the data coming from an eCommerce platform such as Shopify with data generated by other apps and tools such as customer support platforms, websites, inventory management, payment gateways, and CRMs. Moreover, there may be multiple data silos for each app and tool, and all of this data needs to be analyzed to gain a complete understanding of the business and identify areas of improvement.

These silos make comprehensive analysis of the entire business challenging. Data-savvy eCommerce businesses try to reduce the effort of reporting and analysis by integrating data from all these channels into a cloud data warehouse like Google BigQuery. With this step, reporting and analysis become easy and inexpensive, and consequently happen more frequently.

In this post, we will be looking at methods to replicate data from Customer.io to Google BigQuery.

Before we start exploring the process involved in data transfer, let us spend some time looking at these individual platforms.

Customer.io Overview

Customer.io is a B2C solution designed to send all types of messages across platforms, from email to mobile phones. It helps you observe how clients interact with your emails: when clients open or read an email and click on an enclosed link, you get notifications. This data on customer interaction enables you to understand how relevant your messages are. It also helps in sending engaging newsletters that attract attention. Customer.io can be used to send a specific email in response to a particular behaviour of a client. These emails are triggered automatically whenever the software observes a specific action.

Moreover, it collects relevant analytics to study market trends. More than 900 customers use Customer.io to interact seamlessly and without interruption with their clients. The software is capable of managing communication in large organizations. Its users enjoy the following benefits:

- Seamless workflow building and management.

- Automating email drip campaigns

- Extremely flexible and easily integrates with other services.

- Robust email builder.

- Exporting is very easy and quick.

- Webhooks make it easy to send and receive data in event-driven contexts.

Google BigQuery Overview

Google BigQuery is the first genuinely serverless data warehouse-as-a-service offering in the market. There is no infrastructure to manage, no patches to apply, and no upgrades to make. The role of a database administrator in a Google BigQuery environment is to architect the schema and optimize the partitions for performance and cost. The service automatically scales to meet the demands of any query without intervention by a database administrator. Google BigQuery also introduced an unusual pricing model for queries: it is based not on the compute capacity provisioned to run them but on the amount of data each query processes, with storage billed separately.

The best part about Google BigQuery is that you can load data to the service and start using the data immediately. Users no longer have to worry about what runs under the hood because the implementation details are hidden from them. All you need is a mechanism to load data into Google BigQuery and the ability to write SQL queries. By making data warehousing so simple, Google BigQuery has truly revolutionized the cloud data warehousing space and has put the power back in the hands of the analysts.

It is good practice to understand the architecture of Google BigQuery. Understanding the architecture helps in controlling costs, optimizing query performance, and optimizing storage. The factors that govern Google BigQuery Pricing are Storage and Query Data Processed.
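Because query cost follows the amount of data processed, a rough estimate is simple arithmetic. The sketch below is only an illustration: the function name and the unit price are assumptions made for this example, not published figures, so substitute the rate from the current Google BigQuery price list.

```python
TIB = 2**40  # bytes in one tebibyte


def estimate_query_cost(bytes_processed: int, price_per_tib: float) -> float:
    """Return the estimated cost of a query billed by data processed."""
    if bytes_processed < 0 or price_per_tib < 0:
        raise ValueError("inputs must be non-negative")
    return bytes_processed / TIB * price_per_tib


# Hypothetical unit price, for illustration only.
ASSUMED_PRICE_PER_TIB = 5.0

# A query that scans a whole 200 GiB table versus one 10 GiB partition.
full_scan = estimate_query_cost(200 * 2**30, ASSUMED_PRICE_PER_TIB)
partition_scan = estimate_query_cost(10 * 2**30, ASSUMED_PRICE_PER_TIB)
print(f"full scan: {full_scan:.4f}, partition scan: {partition_scan:.4f}")
```

The comparison shows why partitioning matters for cost: the less data a query has to read, the less it costs under this model.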

For more information, visit Customer.io Connector.

Why Do Businesses Need to Replicate Customer.io to Google BigQuery?

Let’s take a simple example to illustrate why data consolidation from Customer.io to Google BigQuery can be helpful for an eCommerce business.

An eCommerce company selling in multiple countries is running campaigns on Customer.io. It has different selling platforms, payment gateways, inventories, logistics channels, and target audiences in each country. Its emails may promote a product that is no longer in stock, or that cannot be delivered in the location where the campaign is running, making these campaigns redundant and causing a substantial loss for the company. When the decision-makers want to rectify this and optimize the Customer.io campaigns to maximize ROI, they face the following problems:

- There are separate data silos for inventory data, logistics data, which need to be separately downloaded and compared and updated regularly to optimize the Customer.io campaign.

- To remarket effectively, you need to target people who have not completed payments or have encountered a failed transaction, in addition to people who have added products to their carts, wishlists, or favourites. People who have responded to other marketing campaigns such as email, SMS, and social media marketing also need to be targeted. So again, separate data silos from various selling platforms, payment gateways, and marketing tools need to be downloaded, analyzed, and compared.

- Audience profiling data from e-commerce platforms, CRMs, customer support systems need to be analyzed to optimize audience targeting.

- While calculating profits/losses of the overall business, it becomes a nearly impossible task to pull all of these data from multiple platforms for each country separately, and then analyze all of this data together with the expense data and calculate profits. It involves a lot of working hours which costs money, and there is usually a time lag involved, which reduces the accuracy of the analysis and its effectiveness as the data analysis does not happen in real-time.

- The compilation and processing of data from multiple sources for thorough research is a considerable challenge if carried out manually.

- Additionally, and more importantly, hardly any company runs its marketing solely on email marketing platforms like Customer.io. Marketers use multiple marketing channels to take the brand message out to the public. Data consolidation is essential to understand the true ROI of campaigns across all the marketing channels, whether the process is manual or not.

For these reasons, top companies consolidate all of their data from Customer.io and other apps and tools into a data warehouse like Google BigQuery to analyze the data and generate reports at a rapid pace.

The more data you can gather from different sources and use in your Customer.io campaigns, the better your message delivery can be optimized. This data cannot be transmitted natively. It must be collected and analyzed correctly in a data warehouse before you use the relevant information to run campaigns on Customer.io.
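The stock-out scenario from the example can be sketched once campaign and inventory data sit side by side. This is a minimal illustration in plain Python; the `Campaign` fields, the SKU values, and the inventory layout are hypothetical and do not reflect the Customer.io data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Campaign:
    campaign_id: str
    country: str
    sku: str


def find_redundant_campaigns(campaigns, stock_by_country_sku):
    """Return campaigns whose product has no stock in the target country."""
    redundant = []
    for c in campaigns:
        # A missing inventory row is treated as zero stock.
        units = stock_by_country_sku.get((c.country, c.sku), 0)
        if units <= 0:
            redundant.append(c)
    return redundant


campaigns = [
    Campaign("welcome-us", "US", "SKU-1"),
    Campaign("promo-de", "DE", "SKU-2"),
    Campaign("promo-in", "IN", "SKU-3"),
]
# Hypothetical inventory keyed by (country, SKU); no row exists for IN/SKU-3.
stock = {("US", "SKU-1"): 40, ("DE", "SKU-2"): 0}

for c in find_redundant_campaigns(campaigns, stock):
    print(c.campaign_id)
```

In a warehouse, the same check would typically be a join between a campaigns table and an inventory table, which is only practical once both datasets live in the same place.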

Replicate data from Customer.io to Google BigQuery

There are two broad ways to pull data from any source to any destination, and the decision is always build versus buy. Let us look at these options to see which one provides the business with a scalable, reliable, and cost-effective solution for reporting and analysis of Customer.io data. You can also retrieve the data from Google BigQuery any time you want.

Use a cloud data pipeline

Building support for APIs is tedious, time-consuming, difficult, and expensive. Engaging analysts or developers in writing support for these APIs takes their time away from more revenue-generating endeavours. Leveraging an eCommerce data pipeline like Daton significantly simplifies the work and shortens the time it takes to build automated reporting. Daton supports automated extraction and loading of Customer.io data into cloud data warehouses like Google BigQuery, Snowflake, Amazon Redshift, and Oracle Autonomous DB.

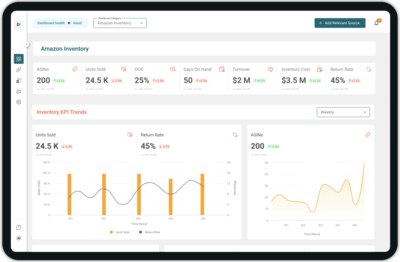

Configuring data replication on Daton takes only a minute and a few clicks. Analysts do not have to write any code or manage any infrastructure, yet they can get access to their Customer.io data in a few hours. Any new data that is generated is automatically replicated to the data warehouse without manual intervention.

Daton supports replication from Customer.io to a cloud data warehouse of your choice, including Google BigQuery. Daton’s simple, easy-to-use interface lets analysts and developers configure data replication from Customer.io into Google BigQuery using UI elements. Daton takes care of:

- Authentication

- Rate limits

- Sampling

- Historical data load

- Incremental data load

- Table creation

- Table deletion

- Table reloads

- Refreshing access tokens

- Notifications

and many other important functions, so that analysts can focus on analysis rather than worry about how the data is delivered.
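To make the difference between a historical load and an incremental load concrete, here is a minimal sketch of cursor-based replication. It is not Daton's implementation; the row shape (`id`, `updated_at`, `opens`) and the in-memory dictionary standing in for a warehouse table are assumptions made for illustration.

```python
from datetime import datetime, timezone


def incremental_load(source_rows, destination, cursor):
    """Upsert rows updated after `cursor` and return the new cursor."""
    new_cursor = cursor
    for row in source_rows:
        if row["updated_at"] > cursor:
            destination[row["id"]] = row  # insert or overwrite by primary key
            new_cursor = max(new_cursor, row["updated_at"])
    return new_cursor


def ts(day):
    """Helper that builds a UTC timestamp in January 2024."""
    return datetime(2024, 1, day, tzinfo=timezone.utc)


EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
warehouse = {}
source = [
    {"id": 1, "updated_at": ts(1), "opens": 3},
    {"id": 2, "updated_at": ts(2), "opens": 0},
]

# Historical load: starting the cursor at the epoch copies every row.
cursor = incremental_load(source, warehouse, EPOCH)

# Later, one row changes and one new row appears in the source.
source[1] = {"id": 2, "updated_at": ts(5), "opens": 7}
source.append({"id": 3, "updated_at": ts(6), "opens": 1})

# Incremental load: only rows newer than the saved cursor are copied.
cursor = incremental_load(source, warehouse, cursor)
print(len(warehouse), warehouse[2]["opens"], cursor.isoformat())
```

A managed pipeline additionally has to handle authentication, rate limits, retries, and schema changes around this core loop, which is where most of the build effort goes.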

Daton – The Data Replication Superhero

Daton is a fully managed cloud data pipeline that seamlessly extracts relevant data from many data sources for consolidation into a data warehouse of your choice for more effective analysis. The best part is that analysts and developers can put Daton into action without writing any code.

Here are more reasons to explore Daton:

- Support for 100+ data sources – In addition to Customer.io, Daton can extract data from a varied range of sources such as Sales and Marketing applications, Databases, Analytics platforms, Payment platforms, and much more. Daton will ensure that you have a way to bring any data to Google BigQuery and generate relevant insights.

- Robust scheduling options allow users to schedule jobs based on their requirements using simple configuration steps.

- Support for all major cloud data warehouses including Google BigQuery, Snowflake, Amazon Redshift, Oracle Autonomous Data Warehouse, PostgreSQL, and more.

- Low Effort & Zero Maintenance – Daton automatically takes care of all the data replication processes and infrastructure once you sign up for a Daton account and configure the data sources. There is no infrastructure to manage and no code to write.

- Flexible loading options allow you to optimize data loading behaviour to maximize storage utilization and simplify querying.

- Enterprise-grade encryption gives you peace of mind.

- Data consistency guarantee and an incredibly friendly customer support team ensure you can leave the data engineering to Daton and focus instead on analysis and insights!

- Enterprise-grade data pipeline at an unbeatable price to help every business become data-driven. Get started with a single integration today for just $10 and scale up as your demands increase.

Interested in learning more about data warehouses, their architecture, and how they are priced? For all sources, check our data connectors page.

We at Saras Analytics can help with our eCommerce-focused data pipeline (Daton) and custom ML and AI solutions to ensure you always have the correct data at the right time. Request a demo and envision how reporting is supercharged with a 360° view.
